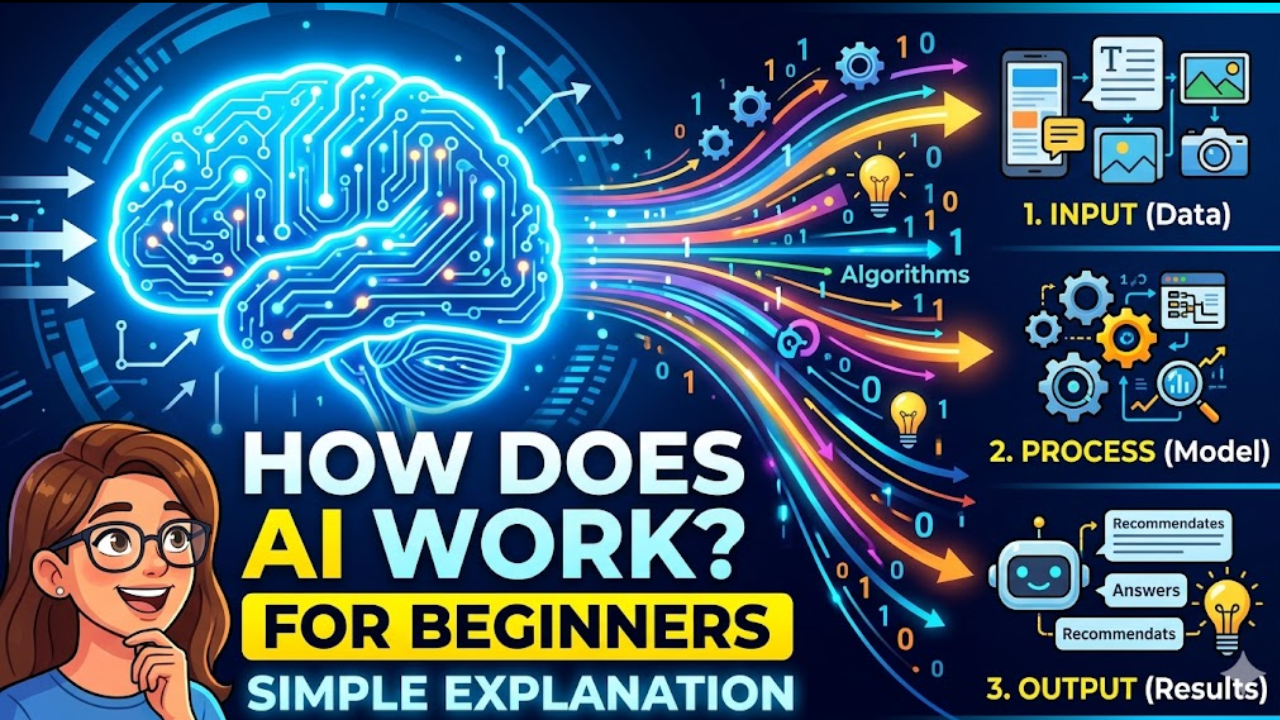

How Does AI Work? A Simple Explanation for Beginners (2026)

Artificial intelligence is reshaping the world — but how does it actually work under the hood? No jargon, no fluff. Here is a clear, plain-English breakdown anyone can understand.

By Olasunkanmi Adeniyi | Updated April 22, 2026 | 11 min read | 2,400 words | Beginner to Intermediate

⚡ Quick Answer

AI works by learning patterns from large amounts of data. Rather than being programmed with rigid rules, an AI system is shown millions of examples — images, text, numbers — and gradually adjusts its internal settings until it can make accurate predictions, recognise objects, answer questions, or generate content on its own.

The core technique behind most modern AI is called machine learning, and a specialised form of it — deep learning — powers everything from voice assistants to ChatGPT.


You have probably heard the term “artificial intelligence” hundreds of times. You might use AI every day: when your phone unlocks with your face, when Netflix recommends a show, or when you type a query into a search engine. But how does it actually work? What is happening inside that black box?

This guide strips away the hype and walks you through the core ideas, step by step, using everyday analogies. By the end, you will have a solid mental model of AI that will help you understand the news, make better decisions about technology, and ask sharper questions.

Key Takeaways

- AI learns from data rather than following hand-written rules.

- Machine learning and deep learning are the main techniques powering modern AI.

- Neural networks are loosely inspired by how neurons connect in the brain.

- Training is the process of adjusting an AI’s internal settings until it performs well.

- AI can be narrow (good at one thing) or general (a long-term research goal).

- No current AI system truly “understands” the world the way humans do.

1. What Is Artificial Intelligence?

Artificial intelligence is the field of computer science focused on building systems that can perform tasks that would normally require human intelligence. These tasks include recognising speech, understanding written text, making decisions, translating languages, generating images, and much more.

The term was coined by John McCarthy in a 1955 proposal, which he co-wrote, for a summer research workshop at Dartmouth College. That 1956 workshop is widely regarded as the founding event of the field. In those early decades, AI researchers hoped to program intelligence into machines by hand, writing explicit rules for every possible situation. This approach, called symbolic AI or rule-based AI, worked well in narrow domains but broke down whenever the real world became messier than the rules anticipated.

The breakthrough that changed everything was a shift in philosophy: instead of telling a machine how to think, what if we showed it enough examples and let it figure out the patterns itself?

That shift is called machine learning, and it is the engine behind virtually every AI system you interact with today.

“Rather than programming a computer to follow rules, machine learning lets computers discover the rules themselves — by studying data.”

2. Machine Learning: Teaching by Example

Think about how a child learns to recognise a cat. Nobody hands the child a formal definition — “a cat is a four-legged mammal with retractable claws and vertical-slit pupils.” Instead, the child sees hundreds of cats, hears the word “cat” associated with them, and gradually builds an internal model of what a cat looks like. Eventually they can spot a cat they have never seen before.

Machine learning works in much the same way. Instead of a child, you have a mathematical model — a set of equations with adjustable internal numbers called parameters or weights. Instead of experience, you have a dataset — perhaps one million labelled images, where each image is tagged “cat” or “not a cat.”

The model looks at an image, makes a guess, and then compares its guess to the correct answer. If it was wrong, its weights are nudged slightly in the direction that would have produced the right answer. Repeat this process millions of times, across millions of examples, and the model’s parameters gradually converge on values that allow it to classify cats accurately — including cats it has never seen.
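To make the guess, compare, nudge loop concrete, here is a minimal sketch using a perceptron, one of the simplest learning algorithms. It is a stand-in chosen for readability, not the gradient-based training that large modern networks use. The two features (imagine "ear pointiness" and "whisker length") and the six training examples are made up for illustration; each example is labelled 1 ("cat") or 0 ("not a cat").

```python
# A toy perceptron: learns to separate two made-up classes of examples.
# Each example is (features, label), where label is 1 ("cat") or 0 ("not a cat").
# The features are hypothetical numbers, not real image data.


def predict(weights, bias, features):
    """Guess 1 if the weighted sum of the features is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=0.1):
    """Show every example repeatedly; nudge the weights only after a wrong guess."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            guess = predict(weights, bias, features)
            error = label - guess  # 0 if correct, +1 or -1 if wrong
            if error != 0:
                # Move each weight slightly in the direction that favours the right answer.
                weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
                bias += learning_rate * error
    return weights, bias


training_data = [
    ([0.9, 0.8], 1), ([0.8, 0.9], 1), ([0.7, 0.6], 1),
    ([0.1, 0.2], 0), ([0.2, 0.1], 0), ([0.3, 0.2], 0),
]

weights, bias = train(training_data)
print(predict(weights, bias, [0.85, 0.75]))  # an unseen "cat-like" example
print(predict(weights, bias, [0.15, 0.25]))  # an unseen "not-cat" example
```

Neither test point appears in the training data; the learned weights still classify them correctly, which is the whole aim of training.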

The three main types of machine learning

| Type | How it learns | Real-world examples |
|---|---|---|
| Supervised learning | Learns from labelled examples (input + correct answer) | Spam email filters, image classifiers, medical diagnosis |
| Unsupervised learning | Finds hidden patterns in unlabelled data | Customer segmentation, anomaly detection, topic modelling |
| Reinforcement learning | Learns by trial and error, rewarded for good outcomes | Chess engines, robot navigation, recommendation systems |

Most of the AI you encounter every day — search engines, voice assistants, image recognition — is built on supervised learning, because it tends to produce the most reliable results when you have large, labelled datasets.
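For contrast with supervised learning, the unsupervised row of the table can be illustrated with k-means clustering, a classic algorithm for finding groups in unlabelled data. The sketch below works in one dimension, and the spending figures and starting centres are invented for illustration. Nothing tells the algorithm which customers belong together; it discovers the two groups on its own.

```python
# Minimal 1-D k-means: group hypothetical customers by monthly spend
# without any labels saying which group each customer belongs to.


def kmeans_1d(values, centres, iterations=10):
    for _ in range(iterations):
        # Assignment step: put each value in the cluster of its nearest centre.
        clusters = [[] for _ in centres]
        for v in values:
            nearest = min(range(len(centres)), key=lambda i: abs(v - centres[i]))
            clusters[nearest].append(v)
        # Update step: move each centre to the mean of its cluster (keep it if empty).
        centres = [sum(c) / len(c) if c else centres[i] for i, c in enumerate(clusters)]
    return centres, clusters


spend = [12, 15, 11, 14, 95, 102, 99, 110]  # made-up monthly spend figures
centres, clusters = kmeans_1d(spend, centres=[0.0, 50.0])
print(centres)   # [13.0, 101.5]
print(clusters)  # [[12, 15, 11, 14], [95, 102, 99, 110]]
```

The algorithm finds a low-spend and a high-spend segment purely from the shape of the data, which is exactly what "finds hidden patterns in unlabelled data" means.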

3. Neural Networks: The Architecture Behind Modern AI

The specific mathematical model that powers most cutting-edge AI is called a neural network. The name is inspired by biology — the human brain contains roughly 86 billion neurons, each connected to thousands of others. Neural networks borrow this idea: they are made of layers of interconnected mathematical units, called artificial neurons or nodes, that pass signals to each other.


How a neural network processes information

- Step 1, input layer: Raw data enters the network. For an image, this could be the pixel values, one number per pixel.

- Step 2, hidden layers: Multiple intermediate layers transform the input. Early layers might detect edges; deeper layers detect shapes; even deeper layers detect objects. Each layer extracts progressively more abstract features.

- Step 3, output layer: The final layer produces an answer, such as a probability that this image shows a cat, a translated sentence, or a predicted next word.

- Step 4, backpropagation: After the output is compared with the correct answer, an algorithm called backpropagation works backwards through the network to calculate how much each weight contributed to the error. Every weight is then adjusted by a tiny amount in the direction that reduces that error. This is repeated for millions of examples.

Networks with many hidden layers are called deep neural networks, and the process of training them is called deep learning. The word “deep” simply refers to the depth of these layers — some modern models have dozens or even hundreds of them.
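The input, hidden and output flow described above can be sketched as a single forward pass through a tiny network with three inputs, two hidden neurons and one output. The weights are hypothetical numbers chosen by hand rather than learned, and the sigmoid squashing function is one common choice among several; in a real network the weights come from training and there are vastly more neurons and layers.

```python
import math


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1 / (1 + math.exp(-z))


def dense_layer(inputs, weights, biases):
    """Each neuron takes a weighted sum of all inputs, adds its bias, then applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hypothetical, hand-picked parameters: 3 inputs -> 2 hidden neurons -> 1 output.
hidden_weights = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]
hidden_biases = [0.0, 0.1]
output_weights = [[1.2, -0.7]]
output_biases = [0.05]

pixels = [0.9, 0.1, 0.4]  # input layer: three made-up pixel brightness values
hidden = dense_layer(pixels, hidden_weights, hidden_biases)  # hidden layer
output = dense_layer(hidden, output_weights, output_biases)  # output layer
print(f"Probability this is a cat: {output[0]:.2f}")
```

A "deep" network simply chains many more `dense_layer`-style steps between the input and the output.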

🧠 Analogy

Imagine a relay race where each runner does a small transformation — adding or removing information — before passing the baton to the next. By the time the baton reaches the finish line, it has been processed so many times that what started as raw pixel data has become a clear verdict: “95% confident this is a cat.”

4. Training: How AI Gets Smarter

Training is the single most important — and most resource-intensive — step in building an AI system. It is essentially the period during which the model learns.

During training, the model sees a huge number of examples from a training dataset and iteratively adjusts its weights to minimise a measurement called the loss — a number that reflects how wrong the model’s predictions are. The algorithm that performs these adjustments is called gradient descent, and its goal is to find the combination of weights that produces the lowest possible loss.
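Gradient descent is easiest to see with a model that has a single weight. The sketch below assumes a toy model whose prediction is simply `w * x`, uses mean squared error as the loss, and fits four made-up data points whose "true" weight is 2. On each step, `w` moves a little way against the slope (the gradient) of the loss, that is, downhill.

```python
# Gradient descent on a one-weight toy model: prediction = w * x.
# Loss = mean squared error between predictions and targets.

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # made-up data where the "true" weight is 2


def loss(w):
    """Average squared gap between predictions and targets."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient(w):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


w = 0.0              # start from an arbitrary guess
learning_rate = 0.05
for step in range(100):
    w -= learning_rate * gradient(w)  # take a small step downhill

print(round(w, 3), round(loss(w), 6))
```

The learning rate sets the step size: too small and training crawls, too large and `w` overshoots the minimum and can diverge. Large models do the same thing with billions of weights at once.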

Modern large AI models, the kind that power tools like ChatGPT, Gemini, or Claude, are trained on staggering quantities of text drawn from the internet, books, scientific papers, and other sources. This gives them a broad, flexible command of language and general knowledge. Training these models can run for weeks or months on thousands of specialised computer chips called GPUs (Graphics Processing Units) or TPUs (Tensor Processing Units), consuming enormous amounts of electricity.

What happens after training?

Once a model is trained, its weights are fixed. The model is then deployed — made available for users to send inputs (called prompts) and receive outputs. For a language model, this means you type a question and the model generates a response, predicting the most plausible next words given the entire context of the conversation. No further learning happens during this phase unless the model is retrained or fine-tuned.

5. Large Language Models: How ChatGPT and Claude Actually Work

Large language models (LLMs) are a category of deep learning model specifically designed to understand and generate text. They are trained on vast text corpora and have billions — sometimes hundreds of billions — of parameters.

The key innovation behind modern LLMs is a neural network architecture called the Transformer, introduced by Google researchers in 2017. Transformers use a mechanism called self-attention, which allows the model to weigh the relevance of every word in a sentence against every other word simultaneously. This makes them far better at understanding context and long-range dependencies in language than earlier models.
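The core of self-attention, in the scaled dot-product form introduced with the 2017 Transformer, can be sketched in a few lines. This simplified version skips the learned projection matrices that a real Transformer uses to produce queries, keys and values, and instead feeds the same made-up token vectors in as all three. It also omits multiple attention heads and positional information.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of token vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Score this token's query against every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)  # how much attention to pay to each token
        # The output is a weighted average of all the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Hypothetical 2-D vectors for a 3-token sentence (simplification: Q = K = V).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = self_attention(tokens, tokens, tokens)
for vec in out:
    print([round(x, 3) for x in vec])
```

Every token's new representation mixes in information from every other token, weighted by relevance, which is how the model captures context across a whole sentence in one step.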

When you type a message to an LLM, it does not look up your question in a database. It breaks your text into tokens (words or pieces of words) and generates a response one token at a time, each time predicting a statistically likely continuation based on everything it absorbed during training. This is why LLMs can answer questions, write code, summarise documents, and hold conversations, but also why they can confidently say things that are factually wrong: they are optimised for plausibility, not truth.
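A drastically simplified version of "predict the next token, append it, repeat" is a bigram model, which looks only at the previous word. The sketch below "trains" by counting which word follows which in a tiny made-up corpus, then generates greedily by always taking the most frequent follower. Real LLMs replace the counting with a Transformer that considers the whole context, and usually sample from a probability distribution rather than always picking the top choice.

```python
from collections import Counter, defaultdict

corpus = "the cat sat on the mat . the cat ate the fish .".split()

# "Training": count which word follows which (a bigram model).
next_counts = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    next_counts[current][following] += 1


def generate(prompt, max_new_words=5):
    """Repeatedly append the most frequent next word (greedy decoding)."""
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = next_counts.get(words[-1])
        if not candidates:
            break  # this word was never followed by anything in training
        # Ties go to the follower seen first in the corpus.
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


print(generate("the"))  # the cat sat on the cat
```

The output is fluent-looking but odd, because a one-word context cannot keep track of what has already been said. Scaling the same predict-and-append idea up to long contexts and billions of parameters is what makes LLM output coherent, and still plausible rather than guaranteed true.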

“An LLM does not retrieve answers — it generates them, word by word, based on patterns learned from billions of documents.”

6. Narrow AI vs. General AI vs. Superintelligence

Not all AI is created equal. Researchers distinguish between three broad categories based on capability:

| Category | What it means | Current status |
|---|---|---|
| Narrow AI (ANI) | Excels at one specific task; cannot generalise beyond it | Exists today; every AI product you use is narrow AI |
| General AI (AGI) | Human-level reasoning and learning across any domain | Does not exist yet; active research goal; timeline debated |
| Superintelligence (ASI) | Vastly exceeds human intelligence in all respects | Theoretical; significant ethical debate surrounds its possibility |

It is important to note that despite how impressive tools like GPT-4 or Claude feel in conversation, they remain narrow AI. They are extremely sophisticated pattern matchers — extraordinarily good at language tasks — but they do not possess consciousness, genuine understanding, or common-sense reasoning the way humans do. They cannot, for instance, learn from a single example the way a child can, or plan a complex series of actions in an unfamiliar physical environment.

7. Real-World AI: Where You Already Use It

AI is not a distant future technology — it is woven into the apps and services you use every day, often invisibly:

| Product / Service | AI at work |
|---|---|
| Google Search | Natural language understanding, ranking, personalised results |
| Spotify / Netflix | Recommendation engines built on collaborative filtering and deep learning |
| Your smartphone camera | Scene recognition, face unlocking, night-mode computational photography |
| Gmail | Spam detection, smart replies, priority inbox |
| Online banking | Real-time fraud detection, anomaly detection |
| Navigation apps | Traffic prediction, route optimisation, ETA estimation |
| Healthcare diagnostics | Medical image analysis (radiology, pathology), drug discovery |
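Collaborative filtering, mentioned in the Spotify / Netflix row, rests on a simple idea: people who rated things similarly in the past will probably agree in future. Below is a minimal user-based sketch using cosine similarity. The users, shows and ratings are hypothetical, and production recommenders combine far more signals and typically rely on learned models rather than this direct comparison.

```python
import math

SHOWS = ["Show A", "Show B", "Show C", "Show D"]

# Hypothetical 1-5 star ratings per user; 0 means "not watched yet".
ratings = {
    "ada": [5, 4, 1, 0],
    "ben": [4, 5, 1, 5],
    "chi": [1, 1, 5, 1],
}


def cosine(a, b):
    """Cosine similarity: closer to 1.0 means more similar taste."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def most_similar(user):
    """Find the other user whose ratings point in the most similar direction."""
    others = [u for u in ratings if u != user]
    return max(others, key=lambda u: cosine(ratings[user], ratings[u]))


def recommend(user, threshold=4):
    """Suggest unwatched shows that the most similar user rated highly."""
    neighbour = most_similar(user)
    return [
        show
        for show, mine, theirs in zip(SHOWS, ratings[user], ratings[neighbour])
        if mine == 0 and theirs >= threshold
    ]


print(most_similar("ada"))  # ben
print(recommend("ada"))     # ['Show D']
```

Ada and Ben both loved Shows A and B, so Ben's enthusiasm for Show D becomes Ada's recommendation, even though nobody wrote a rule saying so.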

8. What AI Cannot Do (Yet)

Understanding the limits of AI is just as important as understanding its capabilities. Current AI systems:

Do not truly understand language. LLMs predict statistically plausible text. They do not “mean” what they say in any deep sense, and they can produce confident nonsense — a problem called hallucination.

Require enormous amounts of data. Humans can learn a concept from one or two examples. Most AI systems require thousands or millions. Improving this data efficiency remains a major research challenge.

Cannot reason reliably. While AI systems can perform impressively on many reasoning tasks, they tend to fail in ways that reveal they are pattern-matching rather than genuinely reasoning, making errors that no sensible human would make.

Have no persistent memory (by default). Most AI models process each conversation or input independently, with no memory of previous interactions unless specifically designed otherwise.

Can encode and amplify bias. Because AI learns from human-generated data, it can absorb and reproduce human biases around race, gender, age, and other dimensions — a significant ethical concern.

Frequently Asked Questions

How does AI work in simple terms?

AI works by learning patterns from large amounts of data. Instead of being programmed with fixed rules, an AI system is shown thousands or millions of examples and adjusts its internal settings until it can correctly identify patterns, make predictions, or generate responses on its own.

What is the difference between AI and machine learning?

Artificial intelligence is the broad concept of machines performing tasks that normally require human intelligence. Machine learning is a subset of AI — it is the specific technique by which AI systems learn from data rather than being explicitly programmed with rules.

Do AI systems actually understand what they are doing?

Not in the way humans do. AI systems are exceptionally good at recognising statistical patterns in data, but they do not have consciousness, intentions, or genuine understanding. They produce outputs that look intelligent because they have been trained on enormous amounts of human-generated information.

How long does it take to train an AI?

It depends on the size and complexity of the model. A simple image classifier might train in hours on a single computer. A large language model like GPT or Claude can take weeks or months of continuous training across thousands of specialised computer chips.

Is AI dangerous?

Like most powerful technologies, AI carries both benefits and risks. Near-term risks include bias, misinformation, job displacement, and misuse. Longer-term risks — such as highly capable AI systems with misaligned goals — are the subject of serious academic and policy debate. Most experts agree that thoughtful governance and safety research are essential.

What programming language is AI written in?

The vast majority of AI and machine learning code is written in Python, largely because of its rich ecosystem of libraries such as TensorFlow, PyTorch, and scikit-learn. The underlying computations, however, often run in highly optimised C++ or CUDA code for speed.

The Bottom Line

Artificial intelligence is, at its core, a very powerful form of pattern recognition. It learns from data, adjusts its internal settings through training, and produces outputs that look remarkably human — sometimes indistinguishably so.

It is neither magic nor the all-knowing robot of science fiction. It is a sophisticated mathematical tool that reflects the data it was trained on and the goals it was optimised for. Understanding how it works — even at this conceptual level — is one of the most valuable intellectual skills you can develop in the decade ahead.

The more you understand AI, the better equipped you are to use it critically, question its outputs, shape how it is built and governed, and — if you choose — build things with it yourself.




© 2026 AI Discoveries

Last reviewed and updated April 22, 2026 · Sources: peer-reviewed AI literature, Stanford AI Index Report, DeepMind research papers
